Latency and Throughput

The difference between latency and throughput. Plus, how to optimize them in system design.

Hi Friends,

Welcome to the 77th issue of the Polymathic Engineer newsletter.

This week, we discuss two critical performance metrics in systems design and computer networking: latency and throughput.

By the end, you'll better understand what these metrics measure and how to optimize them for better system performance.

The outline will be as follows:

Why latency and throughput are important

What is latency

What is throughput

Relationship between latency and throughput

Food for thought

Why latency and throughput are important

Latency and throughput are two measures that directly affect how well and quickly services and applications work.

These measures should always be considered when designing a system from scratch or optimizing the performance of an existing application since they affect the overall user experience.

Latency measures how long an operation takes to finish. It's the delay between a user's action and the system's reaction. When latency is too high, users notice the delay.

For example, high latency can result in lag in online gaming, making the game unplayable. In an e-commerce application, it can slow down transactions, which can cause customers to abandon their shopping carts.

On the other hand, throughput is how much data your system can handle in a certain amount of time. If throughput is low, the system can't process much data at once, leading to bottlenecks and slow performance.

For instance, in video streaming, low throughput can cause buffering and reduce the video quality, negatively impacting user satisfaction.

Many factors can affect a system's latency and throughput: network delay and bandwidth, request processing time, resource contention, the capacity to handle many requests simultaneously, and so on.

In the next section, we will focus on the aspects more related to networking.

Network latency

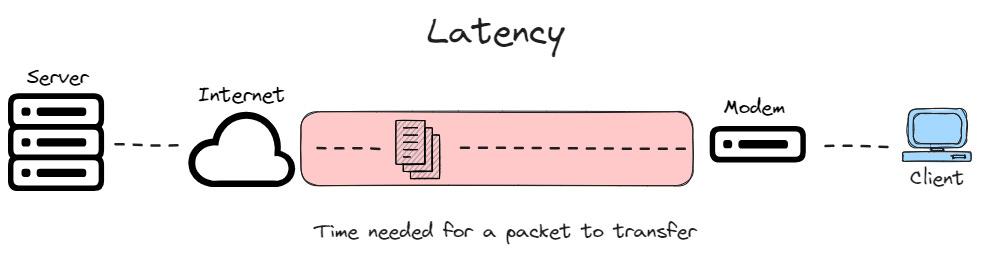

Network latency is the time that packets take to travel across a network. It determines the delay users experience when sending or receiving data over the network.

Networks with long delays have high latency, while those with fast response times have low latency.

You can measure latency as a one-way trip from source to destination or a round trip.

The second method is more common and is usually measured with the ping command, which most operating systems support. It transmits a small data packet (an ICMP echo request), waits for the reply confirming that it arrived, and measures the elapsed time.

The elapsed time, or round-trip time (RTT), is displayed in milliseconds and gives you an idea of how long it takes for your network to transfer data.
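Sending ICMP packets from application code often requires elevated privileges, so the sketch below approximates the round-trip time by timing a TCP connection setup instead, which costs roughly one round trip. The function names are illustrative, and the result also includes DNS resolution time if you pass a hostname rather than an IP address.

```python
import socket
import time


def tcp_connect_rtt_ms(host: str, port: int, timeout: float = 2.0) -> float:
    """Return the time in milliseconds taken to open a TCP connection."""
    start = time.perf_counter()
    # create_connection returns once the TCP handshake has completed,
    # which takes roughly one network round trip.
    with socket.create_connection((host, port), timeout=timeout):
        pass
    return (time.perf_counter() - start) * 1000.0


def median_rtt_ms(host: str, port: int, samples: int = 5) -> float:
    """Take several samples and return the median to smooth out jitter."""
    results = sorted(tcp_connect_rtt_ms(host, port) for _ in range(samples))
    return results[len(results) // 2]
```

For example, `median_rtt_ms("example.com", 443)` gives a rough estimate of the RTT to that host. Taking the median of a few samples keeps a single slow measurement from skewing the result.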

Several factors contribute to latency being high or low. Some important ones are:

Propagation delay. If the destination servers are in a different geographical region from your device, the data has to travel further, which increases latency.

Network congestion. If a high volume of data is transmitted over a network, packets wait longer in router and switch queues, and the likelihood of packet loss and retransmissions increases, leading to higher latency.

Protocol efficiency. Different network protocols introduce varying amounts of overhead, which can affect latency. For example, TCP has more overhead than UDP due to its connection setup, acknowledgments, and retransmissions. In addition, some networks may require additional protocols for security, which create further delays.
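To get a feeling for propagation delay, here is a back-of-the-envelope sketch. It assumes signals travel through optical fiber at roughly 200,000 km/s (about two thirds of the speed of light in a vacuum) and ignores routing detours, queuing, and processing time, so real latencies will be higher. The 6,000 km distance is a made-up example.

```python
# Approximate signal speed in optical fiber (an assumption, not a measured value).
SPEED_IN_FIBER_KM_PER_S = 200_000.0


def propagation_delay_ms(distance_km: float) -> float:
    """One-way propagation delay in milliseconds over the given distance."""
    return distance_km * 1000.0 / SPEED_IN_FIBER_KM_PER_S


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on the round-trip time: there and back again."""
    return 2.0 * propagation_delay_ms(distance_km)


# Hypothetical 6,000 km path: about 30 ms one way, about 60 ms round trip.
print(propagation_delay_ms(6_000), min_round_trip_ms(6_000))
```

No amount of bandwidth removes this delay. The only way to reduce it is to shorten the path, which is what caches and CDNs do.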

Network throughput

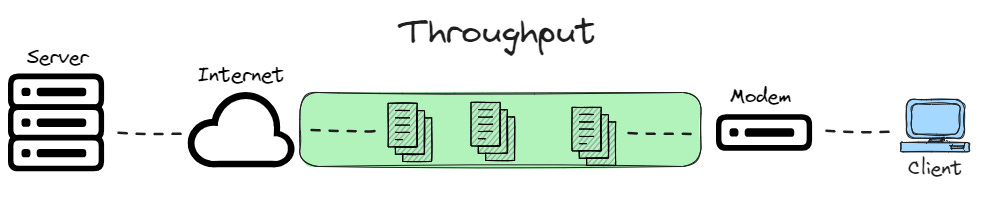

Network throughput is the amount of data that can be successfully transmitted over a network in a given time, and it affects the number of users that can access the network simultaneously.

You can measure throughput either manually or with network testing tools. A way to test it manually is to send a file and divide the file size by the time it takes to arrive.

However, many people use network testing tools, which report throughput alongside other factors like bandwidth and latency.

Network throughput is usually reported in bits per second (bps) and its multiples (Kbps, Mbps, Gbps). Some tools report bytes per second instead (KBps, MBps, GBps), so check the unit: one byte is eight bits.
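The manual method described above boils down to a division plus a unit conversion. The sketch below uses illustrative function names and decimal multiples (1 Kbps = 1,000 bps), and the file size and duration are made-up numbers.

```python
def throughput_bps(bytes_transferred: int, seconds: float) -> float:
    """Throughput in bits per second for a transfer of the given size."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return bytes_transferred * 8 / seconds  # 8 bits per byte


def format_bps(bps: float) -> str:
    """Render a bit rate with the largest fitting decimal unit."""
    for unit in ("bps", "Kbps", "Mbps", "Gbps"):
        if bps < 1000 or unit == "Gbps":
            return f"{bps:.2f} {unit}"
        bps /= 1000
    return f"{bps:.2f} Gbps"  # not reached; keeps the return type explicit


# Hypothetical transfer: a 250 MB file that arrives in 20 seconds.
print(format_bps(throughput_bps(250_000_000, 20.0)))  # 100.00 Mbps
```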

Several factors can impact network throughput:

Bandwidth. Bandwidth is the maximum rate at which data can be transmitted over a network. Higher bandwidth allows more data to be transferred at once, increasing throughput. However, bandwidth alone does not guarantee high throughput if other factors cause bottlenecks.

Processing power. Some network devices have specialized hardware that processes packets faster, leading to higher throughput.

Packet loss. Packet loss can occur because of network congestion or faulty hardware. High packet loss requires more data to be retransmitted, reducing the throughput.

Network topology. A well-designed network provides multiple paths for data transmission, reducing traffic bottlenecks and increasing throughput.

Relationship between latency and throughput

Latency and throughput are distinct metrics, but they are related because both affect how data packets are transmitted.

A network with high latency can have lower throughput, as data takes longer to transmit and arrive. Conversely, low throughput makes it seem like a network has high latency, as it takes longer for large amounts of data to arrive.
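Two simplified formulas make this relationship concrete. The first models transfer time as latency plus size divided by bandwidth. The second is the window limit of a protocol like TCP: a sender that can keep only one window of unacknowledged data in flight cannot exceed window size divided by RTT, however fast the link is. This is a sketch under idealized assumptions (no packet loss, no slow start), and all numbers are illustrative.

```python
def transfer_time_s(size_bytes: int, bandwidth_bps: float, latency_s: float) -> float:
    """Idealized time to deliver a payload: initial delay plus transmission time."""
    return latency_s + size_bytes * 8 / bandwidth_bps


def window_limited_throughput_bps(window_bytes: int, rtt_s: float) -> float:
    """Upper bound on throughput when one window is sent per round trip."""
    return window_bytes * 8 / rtt_s


# Small message on a 100 Mbps link with 50 ms latency: latency dominates.
print(transfer_time_s(1_000, 100e6, 0.050))          # about 0.05 s
# Large file on the same link: bandwidth dominates.
print(transfer_time_s(1_000_000_000, 100e6, 0.050))  # about 80 s
# 64 KiB window with a 100 ms RTT: about 5.2 Mbps, whatever the bandwidth.
print(window_limited_throughput_bps(65_536, 0.100))
```

For small payloads, cutting latency helps the most. For large payloads, bandwidth matters more, unless the round-trip time caps the achievable rate as in the third case.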

As they are closely linked, you must monitor latency and throughput to achieve high network performance.

You can improve latency by shortening the distance data has to travel between the source and destination, and you can improve throughput by increasing the network bandwidth.

Since this is not always possible, here are some strategies to improve latency and throughput together:

Caching. Storing frequently accessed data closer to the user or application reduces latency since data is delivered much faster. In addition, it reduces the load on the original data source, which can handle more requests at once, improving throughput.

Transport protocols. Depending on the specific application, TCP or UDP may be the best choice to achieve an optimal balance between latency and throughput. For example, TCP is better when all data must arrive intact and in order, as in file transfers, while UDP is better for real-time video streaming and gaming, where a late packet is worse than a lost one.

Quality of service. QoS configurations allow you to divide network traffic into specific categories and assign each category a priority level. If the configuration prioritizes some applications, they will experience lower latency than others. QoS configurations can also prioritize data by type, increasing throughput for certain users.
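As an illustration of the caching strategy, here is a minimal sketch of a cache with a time-to-live (TTL). The class name, the `fetch` callback, and the injectable `clock` are assumptions made for the example. A production cache would also need size limits, eviction, and thread safety.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Serve repeated reads from memory and refetch once an entry expires."""

    def __init__(self, fetch: Callable[[str], Any], ttl_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch      # slow call to the original data source
        self._ttl_s = ttl_s
        self._clock = clock      # injectable so tests can control time
        self._entries: Dict[str, Tuple[float, Any]] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl_s:
            self.hits += 1       # fast path: no call to the data source
            return entry[1]
        self.misses += 1         # slow path: fetch and remember the value
        value = self._fetch(key)
        self._entries[key] = (now, value)
        return value
```

Every hit avoids a trip to the original data source, which lowers latency for the caller and frees the source to serve other requests. The ratio of `hits` to `misses` is a quick way to check whether the cache is paying off.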

Food for thought

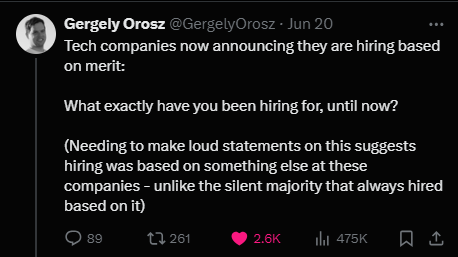

Hiring on merit should be the base and be compatible with diversity, equity, and inclusion considerations.

We sometimes forget that our job is a privilege. We are paid well for doing an addicting job, and we have weekends off. Many people do not have this luxury.

I learned the hard way that giving value is not enough if you don't make noise. It's a pity that many good people miss out on rewards because they are uncomfortable with this.

I feel like this post gives an overview of terms but misses giving an actionable point.

Response time, as an application would measure it, is a function of latency, throughput, protocol overhead, and server/application delay on the other side of the connection.

When examining why something is slow, a waterfall graph, like what you can find in Chrome’s developer tools, is a great first step for figuring out which of the above factors is most influencing the delay.

If network latency is the cause of the issue, you need to tweak the components of that delay function to compensate. Caches or CDNs can directly reduce that latency, but if that isn’t possible, look for ways to reduce protocol overhead. If you’re using TLS 1.2, upgrade to 1.3. If you’re using HTTP/1.1, upgrade to HTTP/2. If you can make it happen in your enterprise network, upgrade to QUIC.
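To see why these upgrades help, count the round trips a new connection needs before the first request can be sent. The figures below are the commonly cited ones for full handshakes: one round trip for TCP, two more for TLS 1.2 or one more for TLS 1.3, and one combined round trip for QUIC. Session resumption, 0-RTT, and TCP Fast Open are ignored, so treat this as a rough sketch. The dictionary keys are labels chosen for the example.

```python
# Round trips needed before the first request, full handshakes only (simplified).
SETUP_ROUND_TRIPS = {
    "tcp+tls1.2": 3,  # 1 for TCP + 2 for TLS 1.2
    "tcp+tls1.3": 2,  # 1 for TCP + 1 for TLS 1.3
    "quic": 1,        # transport and TLS 1.3 handshakes combined
}


def setup_delay_ms(stack: str, rtt_ms: float) -> float:
    """Connection setup delay before the first request can be sent."""
    return SETUP_ROUND_TRIPS[stack] * rtt_ms


# With a hypothetical 50 ms RTT, setup takes 150 ms, 100 ms, and 50 ms.
for stack in SETUP_ROUND_TRIPS:
    print(stack, setup_delay_ms(stack, 50.0))
```

The higher the RTT, the more each saved round trip is worth, which is why these upgrades matter most for users far from the server.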

When talking about protocol overhead, sometimes the biggest overhead is not in the transport (TCP) or session (TLS) layers. A good example is file copies. NFS and SMB2 are common network file share protocols, and they’re both very chatty. If you’re transferring hundreds of individual files, these protocols negotiate for each file individually, which slows things down. The solution: zip up those files. TCP and SMB are really good at a small number of large files, so give them that.

Likewise with database queries, it’s often faster to batch things up. Use a stored procedure to get your processing done on the database itself. If you can’t do that, go ahead and retrieve 5k rows at a time. It’ll be faster on the network.
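A simple cost model shows why batching wins: every request pays one round trip regardless of how many rows it carries. The function below is a sketch with made-up numbers (20 ms RTT, 10 microseconds to transfer one row), not a benchmark of any real database.

```python
import math


def fetch_time_s(total_rows: int, rows_per_request: int,
                 rtt_s: float, row_transfer_s: float) -> float:
    """Estimated time to fetch all rows when each request costs one round trip."""
    round_trips = math.ceil(total_rows / rows_per_request)
    return round_trips * rtt_s + total_rows * row_transfer_s


# Fetching 100,000 rows one at a time: about 2,001 seconds.
print(fetch_time_s(100_000, 1, 0.020, 0.00001))
# Fetching the same rows 5,000 at a time: about 1.4 seconds.
print(fetch_time_s(100_000, 5_000, 0.020, 0.00001))
```

The data volume is identical in both cases. Only the number of round trips changes, and the same reasoning explains why copying one archive beats copying hundreds of small files.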